What key features should a server have when you build a game platform?

- 2024-08-07

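Choosing the right server is critical to the gameplay experience. This article looks at the requirements from several angles and walks through the key criteria and configuration needs for a server used to host a game: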

1. Server location

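Geographic location: A server's physical location directly affects the gameplay experience. Ideally, the server sits close to the player base, which reduces latency and improves access speed. If your players are in China, prefer servers hosted within China.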
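
One way to make the "close to the player base" rule concrete is to compare measured round-trip times from representative players to each candidate region. The sketch below assumes you have already collected such samples; the region names and numbers are made up for illustration, and `pick_region` is a hypothetical helper, not part of any provider's API.

```python
from statistics import median


def pick_region(rtt_samples_ms):
    """Return the region with the lowest median round-trip time (ms).

    rtt_samples_ms maps a region name to a list of RTT measurements.
    Regions with no measurements are ignored.
    """
    medians = {
        region: median(samples)
        for region, samples in rtt_samples_ms.items()
        if samples
    }
    if not medians:
        raise ValueError("no latency samples to compare")
    return min(medians, key=medians.get)


# Illustrative measurements only (assumed values, not real data).
samples = {
    "cn-east": [28, 31, 30, 45],
    "cn-south": [18, 22, 20, 19],
    "overseas": [160, 175, 158, 190],
}
best = pick_region(samples)
print(best)
```

The median is used rather than the mean so that a single slow sample does not dominate the comparison.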

2. Network line and bandwidth requirements

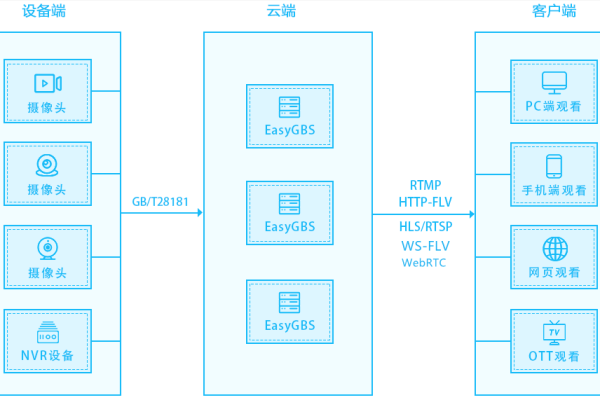

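Quality network lines: The quality of the data center's network lines directly affects how stable and smooth the game feels. A BGP line is a good choice: the data center is typically connected to several carriers, so traffic can take a better route to each player, which gives a more stable and smoother network environment.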

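Sufficient bandwidth: Games can consume a lot of bandwidth, especially when the player count spikes. Provisioning enough bandwidth for peak load is key to keeping gameplay smooth.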
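
A rough way to size bandwidth is to multiply the expected peak player count by the average traffic per player and add headroom for spikes. The sketch below is only a back-of-the-envelope estimate; the per-player rate and the 1.5x headroom factor are assumptions you should replace with measurements from your own game.

```python
def required_bandwidth_mbps(peak_players, kbps_per_player, headroom=1.5):
    """Estimate the bandwidth to provision, in Mbps.

    peak_players:    expected concurrent players at peak
    kbps_per_player: average traffic per player in kilobits per second
    headroom:        safety multiplier for spikes (assumed, must be >= 1)
    """
    if peak_players < 0 or kbps_per_player < 0 or headroom < 1:
        raise ValueError("inputs must be non-negative and headroom >= 1")
    return peak_players * kbps_per_player * headroom / 1000


# Assumed example: 2,000 concurrent players at about 40 kbps each.
estimate = required_bandwidth_mbps(2000, 40)
print(f"{estimate:.0f} Mbps")
```

With these assumed inputs the estimate works out to 2000 × 40 × 1.5 / 1000 = 120 Mbps.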

3. Performance and elasticity

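High-performance hardware: A game server has to process large volumes of data quickly and handle many concurrent operations, so a fast CPU, ample RAM, and high-speed SSD storage are essential.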

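Elastic scaling: A game may need more server resources at peak times, and those resources can be released off-peak. A service that supports automatic scaling, such as cloud servers, handles this pattern better.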
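
The scaling decision itself can be sketched as a small function: work out how many instances the current player count needs, then clamp the result between a floor and a ceiling. This is a simplified illustration, not any cloud provider's autoscaling API; `players_per_instance` and the limits are assumed values you would tune from load tests.

```python
import math


def desired_instances(online_players, players_per_instance,
                      min_instances=1, max_instances=20):
    """Return how many server instances to run for the current load.

    The count is rounded up so no instance is over capacity, then
    clamped to [min_instances, max_instances] (assumed limits).
    """
    if players_per_instance <= 0:
        raise ValueError("players_per_instance must be positive")
    needed = math.ceil(online_players / players_per_instance)
    return max(min_instances, min(max_instances, needed))


# Assumed capacity: about 200 players per instance.
print(desired_instances(950, 200))    # evening peak
print(desired_instances(30, 200))     # quiet period
```

Keeping a minimum of one instance means the game stays reachable off-peak, while the maximum caps spending during an unexpected surge.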

4. Configuration and upgrade options

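Initial configuration: Choose a starting configuration based on your current player count and expected growth. This covers the number of CPU cores, the amount of RAM, and storage capacity.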

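Upgrade path: As the player base grows, the initial configuration may no longer be enough, so it is important to choose a configuration or provider that lets you upgrade flexibly.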

5. Cost and budget planning

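Cost control: The cost of renting or buying servers has to be factored in. Depending on the game's scale and needs, options range from economical entry-level configurations to high-end ones, with prices running from a few hundred to several thousand yuan per month.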

6. Security and stability

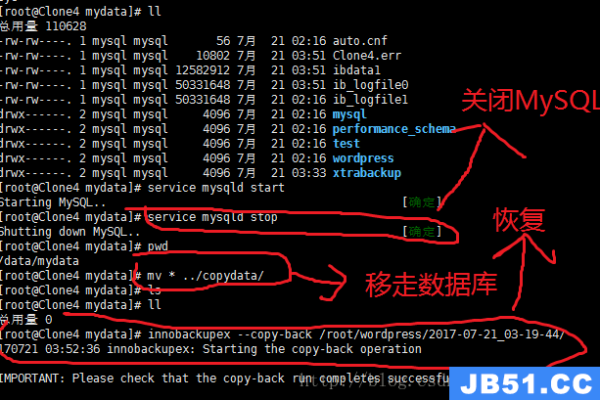

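Data security: Protecting game data is an essential part of running a game. This includes measures such as DDoS mitigation, data encryption, and backups.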
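
Large-scale DDoS mitigation is normally handled by the hosting provider or a dedicated protection service, but the game server can also limit how fast any single client may send requests. The sketch below shows a token bucket, one common rate-limiting technique; it is an application-level complement under assumed limits, not a replacement for network-level protection. The class name and parameters are illustrative.

```python
import time


class TokenBucket:
    """Allow bursts up to `capacity`, refilling at `rate_per_sec` tokens/s."""

    def __init__(self, rate_per_sec, capacity, clock=time.monotonic):
        self.rate = rate_per_sec
        self.capacity = capacity
        self.tokens = float(capacity)   # start with a full bucket
        self.clock = clock              # injectable for testing
        self.last = clock()

    def allow(self):
        """Return True if one request may proceed, consuming a token."""
        now = self.clock()
        # Refill according to elapsed time, never above capacity.
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# One bucket per client (keyed by IP or account ID, for example);
# the limits here are assumed values.
bucket = TokenBucket(rate_per_sec=2, capacity=3)
print([bucket.allow() for _ in range(3)])
```

In practice you would keep one bucket per client and drop or delay requests for which `allow()` returns `False`.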

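Stability: Server stability is critical to maintaining a good user experience, so it makes sense to choose a provider with a solid reputation and high-quality customer support.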

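When choosing a server for a game, it is essential to understand the game's specific requirements and anticipate future growth. The choice is not only about hardware performance. It also depends on the quality of the server's network connectivity, globally or in your target region, and on its ability to keep the game running reliably at peak times. A carefully chosen server gives players a low-latency, highly stable environment, which helps attract and retain more of them.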